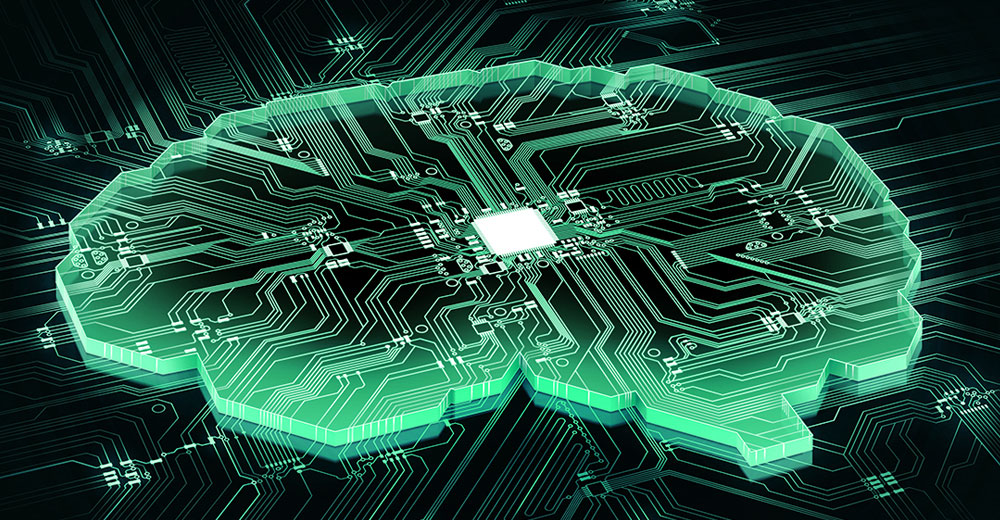

Startup chip developer Cerebras on Monday announced a breakthrough in high-speed processor design that will hasten the development of artificial intelligence technologies.

Cerebras unveiled the largest computer processing chip ever built. The new chip, dubbed “Wafer-Scale Engine” (WSE) — pronounced “wise” — is the heartbeat of the company’s deep learning machine built to power AI systems.

WSE reverses a chip industry trend of packing more computing power into smaller form-factor chips. Its massive size measures eight and a half inches on each side. By comparison, most chips fit on the tip of your finger and are no bigger than a centimeter per side.

The new chip’s surface contains 400,000 little computers, known as “cores,” with 1.2 trillion transistors. The largest graphics processing unit (GPU) is 815 mm² and has 21.1 billion transistors.

The chip already is in use by some customers, and the company is taking orders, a Cerebras spokesperson said in comments provided to TechNewsWorld by company rep Kim Ziesemer.

“Chip size is profoundly important in AI, as big chips process information more quickly, producing answers in less time,” the spokesperson noted. The new chip technology took Cerebras three years to develop.

Bigger Is Better to Train AI

Reducing neural networks’ time to insight, or training time, allows researchers to test more ideas, use more data and solve new problems. Google, Facebook, OpenAI, Tencent, Baidu and many others have argued that the fundamental limitation of today’s AI is that it takes too long to train models, the Cerebras spokesperson explained, noting that “reducing training time thus removes a major bottleneck to industry-wide progress.”

Accelerating training using WSE technology enables researchers to train thousands of models in the time it previously took to train a single model. Moreover, WSE enables new and different models.

Those benefits result from the very large universe of trainable algorithms. The subset that works on GPUs is very small. WSE enables the exploration of new and different algorithms.

Training existing models in a fraction of the time and training new models to do previously impossible tasks will change the inference stage of artificial intelligence profoundly, the Cerebras spokesperson said.

Understanding Terminology

To put the anticipated advanced outcomes into perspective, it is essential to understand three concepts about neural networks:

- Training is learning;

- Inference is applying learning to tasks; and

- Inference is using learning to classify.

For example, you first must teach an algorithm what animals look like. This is training. Then you can show it a picture, and it can recognize a hyena. That is inference.
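The split between training and inference can be sketched in a few lines of Python. This is a toy illustration only, not how a deep neural network is trained: it assumes a simple nearest-centroid classifier, and the animal features, values and function names (`train`, `infer`) are invented for the example.

```python
# Toy illustration only: a nearest-centroid classifier on made-up features.
# Real deep learning training is far more involved; the data here is invented.

def train(examples):
    """Training: learn one average feature vector (centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def infer(model, features):
    """Inference: apply what was learned to classify an unseen example."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(model, key=lambda label: squared_distance(model[label]))


# Hypothetical features: (body length in meters, spot density from 0 to 1).
labeled_animals = [
    ((1.3, 0.8), "hyena"),
    ((1.5, 0.7), "hyena"),
    ((2.4, 0.0), "lion"),
    ((2.6, 0.1), "lion"),
]

model = train(labeled_animals)           # training: done once, up front
prediction = infer(model, (1.4, 0.9))    # inference: repeated for each new picture
print(prediction)
```

Training runs once over the labeled examples, while inference reuses the stored result for every new input, which is why inference can run on much smaller hardware than training.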

Enabling vastly faster training and new and improved models forever changes inference. Researchers will be able to pack more inference into smaller compute and enable more power-efficient compute to do exceptional inference.

This process is particularly important since most inference is done on machines that use batteries or that are in some other way power-constrained. So better training and new models enable more effective inference to be delivered from phones, GoPros, watches, cameras, cars, security cameras/CCTV, farm equipment, manufacturing equipment, personal digital assistants, hearing aids, water purifiers, and thousands of other devices, according to Cerebras.

The Cerebras Wafer Scale Engine is no doubt a huge feat for the advancement of artificial intelligence technology, noted Chris Jann, CEO of Medicus IT.

“This is a strong indicator that we are committed to the advancement of artificial intelligence — and, as such, AI’s presence will continue to increase in our lives,” he told TechNewsWorld. “I would expect this industry to continue to grow at an exponential rate as every new AI development continues to increase its demand.”

WSE Size Matters

Cerebras’ chip is 57 times the size of the leading chip from Nvidia, the “V100,” which dominates today’s AI. The new chip has more memory circuits than any other chip: 18 gigabytes, which is 3,000 times as much as the Nvidia part, according to Cerebras.
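The size comparison can be checked with quick arithmetic from the figures quoted in this article: 8.5 inches per side and 1.2 trillion transistors for WSE, against 815 mm² and 21.1 billion transistors for the largest GPU. The sketch below assumes that the 815 mm² GPU is the same Nvidia part used in the 57-times comparison and that "gigabytes" means decimal gigabytes; the variable names are illustrative.

```python
# Figures quoted in the article; the inch-to-millimeter factor is exact.
MM_PER_INCH = 25.4

wse_side_in = 8.5
wse_transistors = 1.2e12
wse_memory_bytes = 18e9        # assumption: decimal gigabytes

gpu_area_mm2 = 815             # assumption: same part as the Nvidia comparison
gpu_transistors = 21.1e9

wse_area_mm2 = (wse_side_in * MM_PER_INCH) ** 2
area_ratio = wse_area_mm2 / gpu_area_mm2
transistor_ratio = wse_transistors / gpu_transistors

# Memory figure implied for the Nvidia part by the "3,000 times" claim.
implied_gpu_memory_mb = wse_memory_bytes / 3000 / 1e6

print(f"WSE area: {wse_area_mm2:,.0f} mm^2")
print(f"Area ratio: {area_ratio:.1f}x")
print(f"Transistor ratio: {transistor_ratio:.1f}x")
print(f"Implied GPU memory: {implied_gpu_memory_mb:.0f} MB")
```

Under those assumptions the area ratio comes out near 57, consistent with the company's claim, and the transistor ratio is about the same. The 3,000-times memory claim implies roughly 6 MB for the Nvidia part, which suggests the comparison is to memory on the chip itself rather than to the external memory attached to a GPU.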

Chip companies long have sought a breakthrough in building a single chip the size of a silicon wafer. Cerebras appears to be the first to succeed with a commercially viable product.

Cerebras received about US$200 million from prominent venture capitalists to seed that accomplishment.

The new chip will spur the reinvention of artificial intelligence, suggested Cerebras CEO Andrew Feldman. It provides the parallel-processing speed that Google and others will need to build neural networks of unprecedented size.

It is hard to say just what kind of impact a company like Cerebras or its chips will have over the long term, said Charles King, principal analyst at Pund-IT.

“That’s partly because their technology is essentially new — meaning that they have to find willing partners and developers, let alone customers to sign on for the ride,” he told TechNewsWorld.

AI’s Rapid Expansion

Still, the cloud AI chipset market has been expanding rapidly, and the industry is seeing the emergence of a wide range of use cases powered by various AI models, according to Lian Jye Su, principal analyst at ABI Research.

“To address the diversity in use cases, many developers and end-users need to identify their own balance of the cost of infrastructure, power budget, chipset flexibility and scalability, as well as developer ecosystem,” he told TechNewsWorld.

In many cases, developers and end users adopt a hybrid approach in determining the right portfolio of cloud AI chipsets. Cerebras WSE is well-positioned to serve that segment, Su noted.

What WSE Offers

The new Cerebras technology addresses the two main challenges in deep learning workloads: computational power and data transmission. Its large silicon size provides more chip memory and processing cores, while its proprietary data communication fabric accelerates data transmission, explained Su.

With WSE, Cerebras Systems can focus on ecosystem building via its Cerebras Software Stack and be a key player in the cloud AI chipset industry, noted Su.

The AI process involves the following:

- Cerebras-built software tools for design;

- building in redundant circuits to route around defects in silicon manufacturing in order to still deliver 400,000 working optimized cores, like a miniature Internet that keeps going when individual server computers go down; and

- moving data in new ways for better training of a neural network that requires thousands of operations to happen in parallel at each moment in time.
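The idea of routing around manufacturing defects can be illustrated with a minimal sketch. It is not Cerebras' actual mechanism, which the article does not describe in detail. It assumes a hypothetical scheme in which extra physical cores are built and the working ones are assigned logical core numbers after testing; `map_logical_cores` and the sample data are invented for the example.

```python
def map_logical_cores(physical_ok, needed):
    """Map logical core IDs onto working physical cores, skipping defects.

    physical_ok: list of booleans, True where a physical core passed testing.
    needed: number of working cores the chip must expose.
    Returns a list where the index is the logical core ID and the value is
    the physical core it is routed to.
    """
    working = [idx for idx, ok in enumerate(physical_ok) if ok]
    if len(working) < needed:
        raise ValueError(
            f"only {len(working)} working cores, but {needed} are required"
        )
    return working[:needed]


# Hypothetical row: 10 physical cores built so that 8 can always be delivered.
# Cores 2 and 6 failed testing and are skipped.
row = [True, True, False, True, True, True, False, True, True, True]
mapping = map_logical_cores(row, needed=8)
print(mapping)  # [0, 1, 3, 4, 5, 7, 8, 9]
```

Software sees eight consecutive logical cores, while the spare physical cores absorb the two defects. A chip with too few spares for its defects would fail the check instead of shipping with fewer cores than promised.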

The larger WSE chip solves a problem common to computers built from multiple chips: they slow down when data has to travel between chips over the slower wires linking them on a circuit board.

The wafers were produced in partnership with Taiwan Semiconductor Manufacturing, the world’s largest chip manufacturer, but Cerebras has exclusive rights to the intellectual property that makes the process possible.

Available Now But …

Cerebras will not sell the chip on its own. Instead, the company will package it as part of a computer appliance Cerebras designed.

A complex system of water-cooling — an irrigation network — is necessary to counteract the extreme heat the new chip generates running at 15 kilowatts of power.

The Cerebras computer will be 150 times as powerful as a server with multiple Nvidia chips, at a fraction of the power consumption and a fraction of the physical space required in a server rack, Feldman said. That will make neural training tasks that cost tens of thousands of dollars to run in cloud computing facilities an order of magnitude less costly.